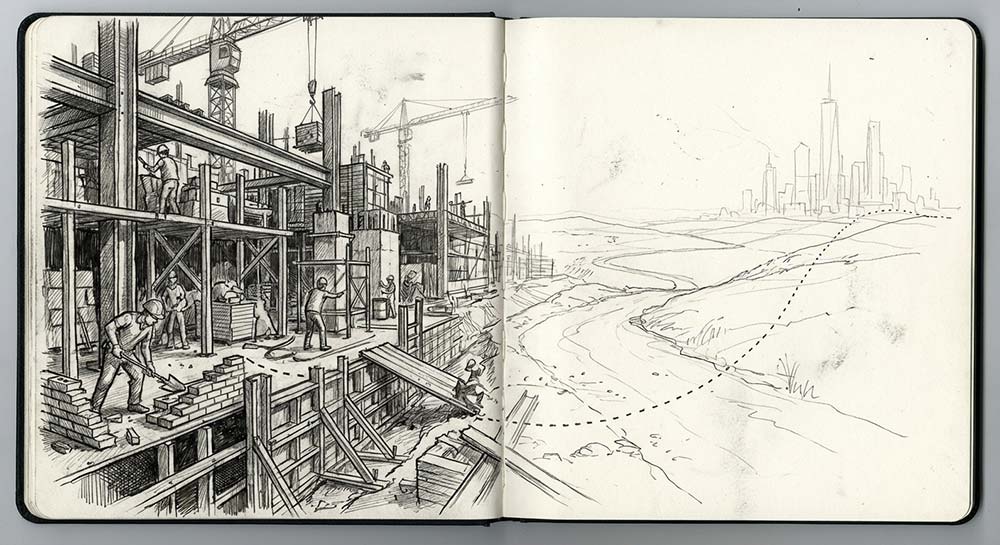

The software industry has long treated quality and speed as a zero-sum trade-off: we either move slowly to ensure excellence, or move quickly and embrace the mess. AI magnifies this tension—we can now generate UI, docs, tests, and scaffolding at a pace that would have sounded like science fiction a few years ago.

But we are still missing the point.

Speed was never the goal. Speed is a byproduct of how effectively we achieve an outcome, and quality is the foundation that allows for that flow.

You might think you know where I'm going with this, but quality was never the goal, either.

When quality becomes an end in itself, we drift into navel-gazing: fetishizing process, polishing abstractions, and optimizing the form of code over its function. Quality has a cost, and if that cost doesn't buy us faster learning, safer change, or better outcomes, it's not quality; it's overhead.

AI makes the choice of rigor unavoidable. It acts as a force multiplier for our existing process: it can compress our feedback loops or simply accelerate entropy. AI lowers the cost of outputs, which dramatically increases the cost of being wrong.

This is the heart of why AI should matter to us.

Three days to make the other mistake

I'm a speed addict. I need a lightning-fast feedback loop to keep me in flow. When I first started working with Justin Searls, I saw someone who shared that same hunger for speed but chased it down a completely different path: TDD, discipline, and constraints.

When I wanted to start coding, he would pull me back to driving the behavior with tests. I was skeptical. When I complained (which was often), Justin would offer a simple invitation: "Why don't we just try it for three days?"

It was the right amount of time: long enough that I couldn't bail at the first sign of friction, but short enough that it didn't feel like a life sentence. I recall the moment it clicked for me, while building a complex drag-and-drop table that had to work in IE6.

Justin challenged me to lean into the discomfort with constraints: no browser, no code, just express the behavior in tests first. I decided to try it. When we finally opened IE6 and the feature worked perfectly the first time, I realized I had found a different kind of speed: one built on verification.

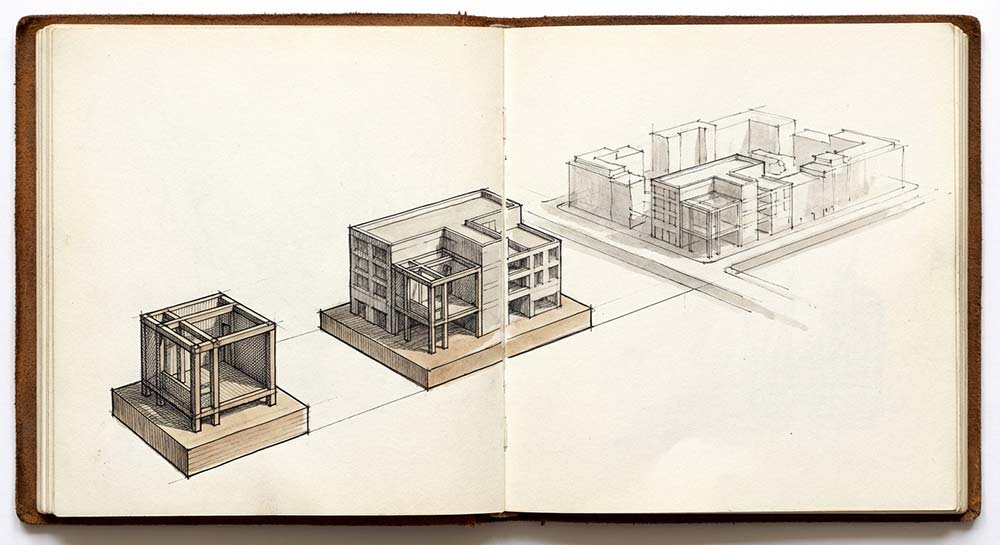

In retrospect, Justin was helping me "make the other mistake." Mark Rabkin describes this as the most effective way to move toward an ideal state. Instead of trying to course-correct with minor increments, we intentionally move to the opposite extreme to find where the ideal center actually lies.

Engineers know this instinctively. When debugging, nobody steps through commits one by one—at least not once we know about git bisect, which cuts the problem space in half with each iteration.
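To make the halving idea concrete, here is a minimal Python sketch of a bisect-style search. It is not how git implements `git bisect`; it assumes a simple linear history, a known-good first commit, a known-bad last commit, and a hypothetical `is_bad` callback standing in for "check out this commit and run the test."

```python
from typing import Callable, Sequence, Tuple


def first_bad_commit(
    commits: Sequence[str], is_bad: Callable[[str], bool]
) -> Tuple[str, int]:
    """Return the first bad commit and the number of checks performed.

    Assumes commits are ordered oldest to newest, commits[0] is good,
    commits[-1] is bad, and every commit after the first bad one is also bad.
    """
    good, bad = 0, len(commits) - 1
    checks = 0
    while bad - good > 1:
        mid = (good + bad) // 2  # jump to the middle instead of stepping by one
        checks += 1
        if is_bad(commits[mid]):
            bad = mid  # the culprit is at or before mid
        else:
            good = mid  # the culprit is after mid
    return commits[bad], checks


# Hypothetical history: 1,000 commits, with the bug introduced at commit 700.
commits = [f"c{i}" for i in range(1000)]
culprit, checks = first_bad_commit(commits, lambda c: int(c[1:]) >= 700)
print(culprit, checks)
```

Stepping through one commit at a time could take hundreds of checks here; halving the range needs at most ten. That is the same logic as overshooting on purpose: a big jump tells us which side of the answer we are on.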

Speed addicts see themselves as "scrappy." Quality purists see themselves as "responsible." Each identity has a gravitational pull; small nudges aren’t enough to reach the escape velocity needed for lasting change. We have to be willing to overshoot. We have to be willing to make the other mistake.

That identity attachment scales from individuals to teams. Teams get trapped on one side of the quality-speed spectrum: they either ship fast and break things, or they become paralyzed by the pursuit of a "perfect" plan. When things go wrong, they try to reduce risk by making only small changes. But small adjustments don't threaten the team's identity, and identity is what keeps them stuck.

AI makes this more consequential. A speed-biased team can now generate misunderstood code, wrong abstractions, and avoidable debt at machine speed. Our leverage as engineers has shifted: if we don't have the discipline to lean into rigor, we are just accelerating entropy. The cost of being stuck at one extreme is much higher.

Not speed or quality. Not speed then quality. Speed and quality.

Hammers, screwdrivers, and meta-skills for AI development

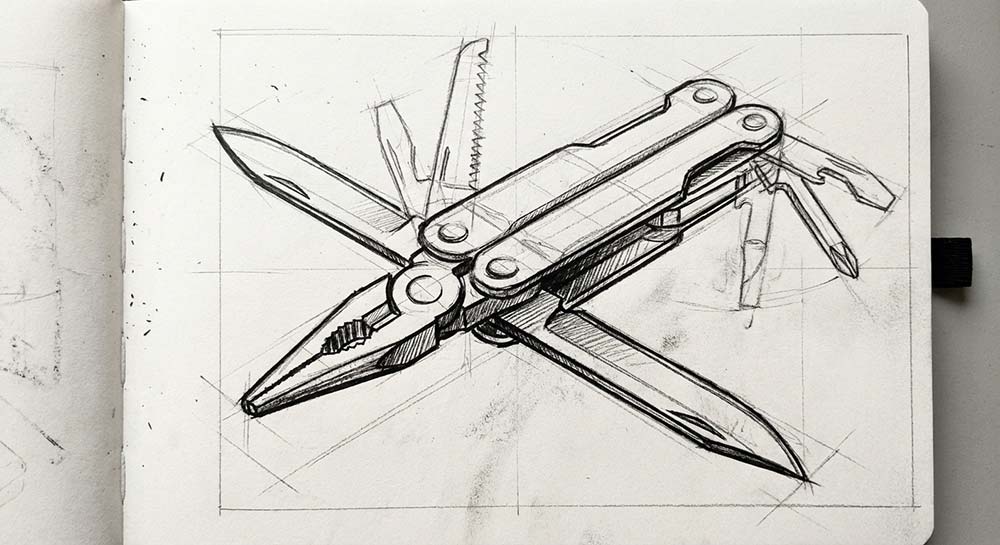

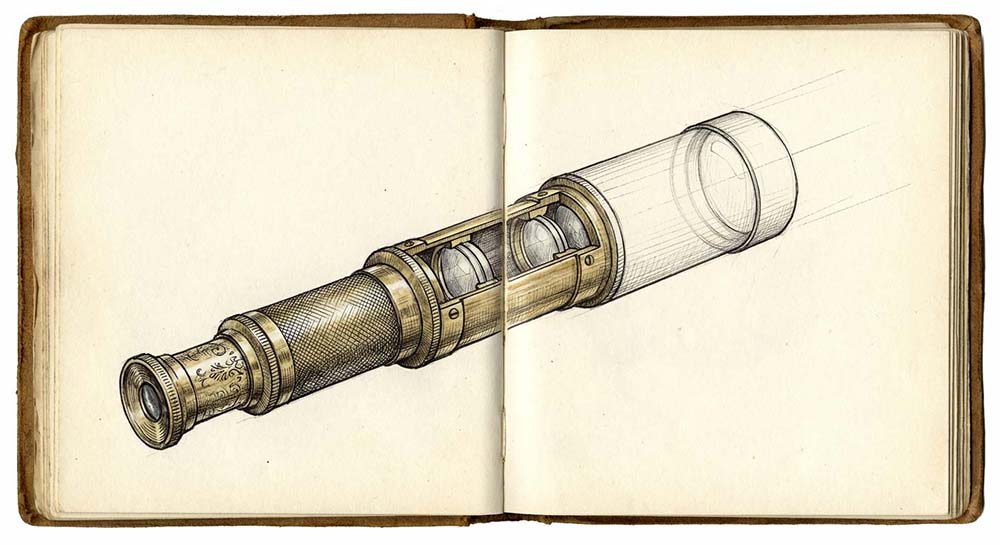

Justin often described his tools and techniques as either a "blunt instrument" or a "precision instrument"—and the same tool could be either one depending on how we wield it.

TDD is a perfect example. Used as a blunt instrument, TDD incentivizes the wrong behavior: chasing coverage metrics and writing tests to satisfy a graph. Focus drifts away from what actually matters: Does our testing strategy give us confidence to change the system? But wielded as a precision instrument, TDD becomes a discovery process.

I love how Annie Duke captures the "metaskill" of choosing between speed and quality in How to Decide:

“If you're putting together a dresser that comes with a set of screws, you could be tempted to use a hammer to save time if you don't have a screwdriver handy. Sometimes, a hammer will do an okay job, and it will be worth the time you save. But other times, you could break the dresser or build a shoddy health hazard. The problem is that we're just not good at recognizing when sacrificing that quality isn't that big a deal. Knowing when the hammer is good enough is a metaskill worth developing.”

It's tempting to apply this to AI by assuming we should let the LLM handle "blunt" tasks while we save the "precision" work for ourselves. That idea doesn't scale anymore; we're already the bottleneck. AI isn't just a blunt instrument. It can be a precision instrument too—and increasingly, it is a sophisticated multi-tool. The mode it operates in isn't determined by the task; it's determined by the quality of judgment we bring to bear.

Wield AI with vague intent, and you get blunt results regardless of the task. Wield it with precise inputs, clear constraints, and exacting taste, and it can operate as a precision instrument even on complex work.

Inputs, outputs, and outcomes: Why constraints matter

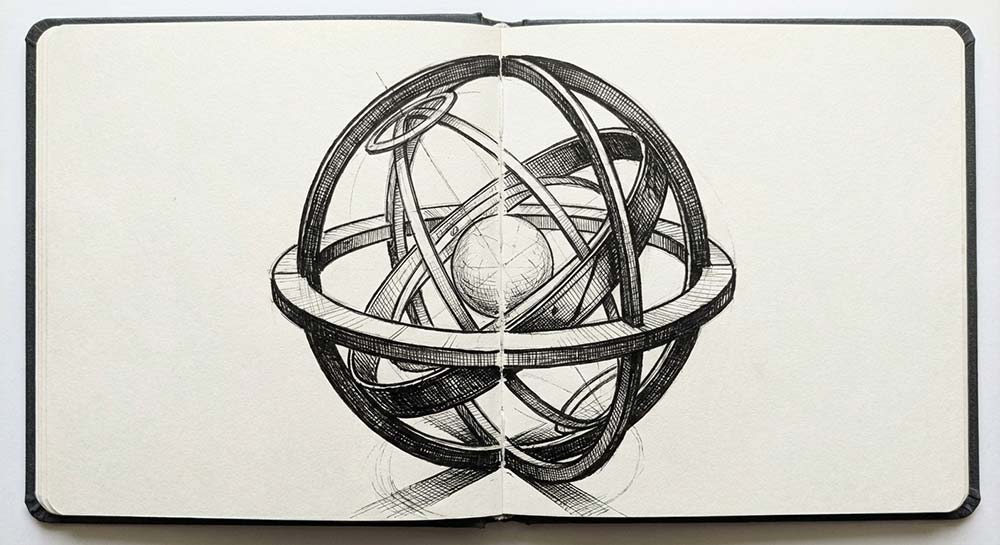

Pure functions are simple yet powerful building blocks that guarantee a predictable, reproducible result from a specific set of inputs. They are the bedrock of reliable systems because they eliminate hidden side effects.
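As a small illustration, the Python sketch below contrasts a pure function with an impure one. The names (`pure_total`, `impure_total`, `settings`) and the tax example are hypothetical, chosen only to show the difference.

```python
from typing import Tuple

# Hidden, mutable module-level state that the impure function depends on.
settings = {"tax_percent": 10}


def impure_total(prices_cents: Tuple[int, ...]) -> int:
    """Impure: reads hidden state and mutates it as a side effect."""
    total = sum(prices_cents) * (100 + settings["tax_percent"]) // 100
    settings["tax_percent"] += 1  # side effect: the next call behaves differently
    return total


def pure_total(prices_cents: Tuple[int, ...], tax_percent: int) -> int:
    """Pure: the result depends only on the arguments, and nothing else changes."""
    return sum(prices_cents) * (100 + tax_percent) // 100


cart = (1000, 2500)

# Same inputs, same output, every time.
print(pure_total(cart, 10), pure_total(cart, 10))

# Same input, different outputs, because hidden state changed between calls.
print(impure_total(cart), impure_total(cart))
```

The pure version can be tested, cached, and reasoned about from its signature alone. The impure version can only be understood by knowing everything that has touched `settings`.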

A function that expresses the core insight of React is:

fn(state) -> ui

For a long time, we optimized for:

fn(tdd, xp, pairing, ci/cd) -> software

We assumed that if we got the "how" right, the "why" would naturally follow.

That function does improve software quality, but it misses a core product truth: a system can satisfy every purist definition of quality and still fail if it doesn't create product-market fit.

Conversely, we've all seen brittle, awkward software succeed because it solved the right problem at the right time. AI makes this gap impossible to ignore by commoditizing output. As the cost of generating software collapses, code can't be our only focus; it is just one parameter in a larger outcome function.

fn(software, market context, user need) -> outcome

In this new world, our quality practices aren't just about making the code "good." They are the constraints that stop us from using AI to generate a mountain of "correct" code that solves the wrong problem.

Outputs are cheap. Outcomes never were.

The harness: Encoding taste and judgment into AI workflows

"Harness" is one of several labels that have emerged since 2022—prompt engineering, context engineering, harness engineering, and intent engineering. Different names, same underlying challenge.

We're no longer responsible only for producing code that fits our preferred architecture. We're responsible for designing the harness: clear boundaries, enforced standards, verification loops, and decision records that encode taste and judgment across sessions, even when the context window resets.

In practice, that means:

- Architecture boundaries and naming conventions

- Lint rules, types, and interfaces

- Tests and testing strategies

- Feedback loops and review workflows

- Decision logs and system observability
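One way to picture a harness is as a gate that every generated change must pass before it is accepted. The Python sketch below is purely illustrative: the `Change` type, the three checks, and the 300-line budget are all hypothetical, and a real harness would more likely shell out to linters, type checkers, and test runners than inspect metadata.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class Change:
    """Hypothetical summary of a proposed (possibly AI-generated) change."""
    files: Tuple[str, ...]
    lines_changed: int
    decision_note: str  # the recorded "why" behind the change


# A check returns None on success, or a human-readable failure message.
Check = Callable[[Change], Optional[str]]


def requires_decision_note(change: Change) -> Optional[str]:
    if not change.decision_note.strip():
        return "missing decision note: record why this change exists"
    return None


def requires_tests(change: Change) -> Optional[str]:
    if not any("test" in path for path in change.files):
        return "no test files touched: behavior is unverified"
    return None


def within_size_budget(change: Change, max_lines: int = 300) -> Optional[str]:
    if change.lines_changed > max_lines:
        return f"{change.lines_changed} lines changed: too large to review with care"
    return None


def run_harness(change: Change, checks: List[Check]) -> List[str]:
    """Run every check and collect all failures, rather than stopping at the first."""
    failures = []
    for check in checks:
        message = check(change)
        if message is not None:
            failures.append(message)
    return failures


CHECKS: List[Check] = [requires_decision_note, requires_tests, within_size_budget]

generated = Change(files=("src/cart.py",), lines_changed=1200, decision_note="")
for failure in run_harness(generated, CHECKS):
    print("REJECT:", failure)
```

The point of the sketch is that the standards live in the checks, not in anyone's memory or in a single session's context, so they still apply after the context window resets.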

Specific tools matter less. The model, the IDE, and the framework are no longer the primary differentiators. The harness we build, and our ability to build it, are what differentiate us.

At Test Double, we’ve been in the business of optimizing for feedback loops, verification, and confidence for years. With AI, the problems and the optimizations are the same. The only thing that's changed is the medium.

Leading and lagging indicators of outcome quality

Before AI, the industry was already shifting toward a blend of product and engineering disciplines often called Product Engineering, which moves us upstream to a higher-leverage starting point: asking "Why will this move the needle?" not just "How do we build this spec?"

That shift accelerated for us with the acquisition of Pathfinder, which brought more product-focused practitioners into our world—people whose instinct was to ask "What outcome are we actually driving toward?" before writing code.

Our core lesson has been that outcome quality is not something we should defer evaluating until post-launch.

Yes, the strongest signals of outcome quality are lagging indicators: adoption, revenue, reliability, and customer satisfaction. But on an in-flight project—especially one augmented by AI—there are real and meaningful leading indicators:

- Trust in the team: Are stakeholders confident enough to let the team pivot based on what they're learning?

- Decision clarity: Is the "why" behind an architecture choice explicit, or are we just following a default?

- Reduced decision churn: Are we resolving ambiguity faster than we are generating new code?

- Honest planning: Are assumptions big and visible, or are they buried in a PR marked "completed"?

These are not "soft" metrics. They are the conditions that make high-quality product outcomes possible. They are the signals that the harness is working.

What’s next for Test Double

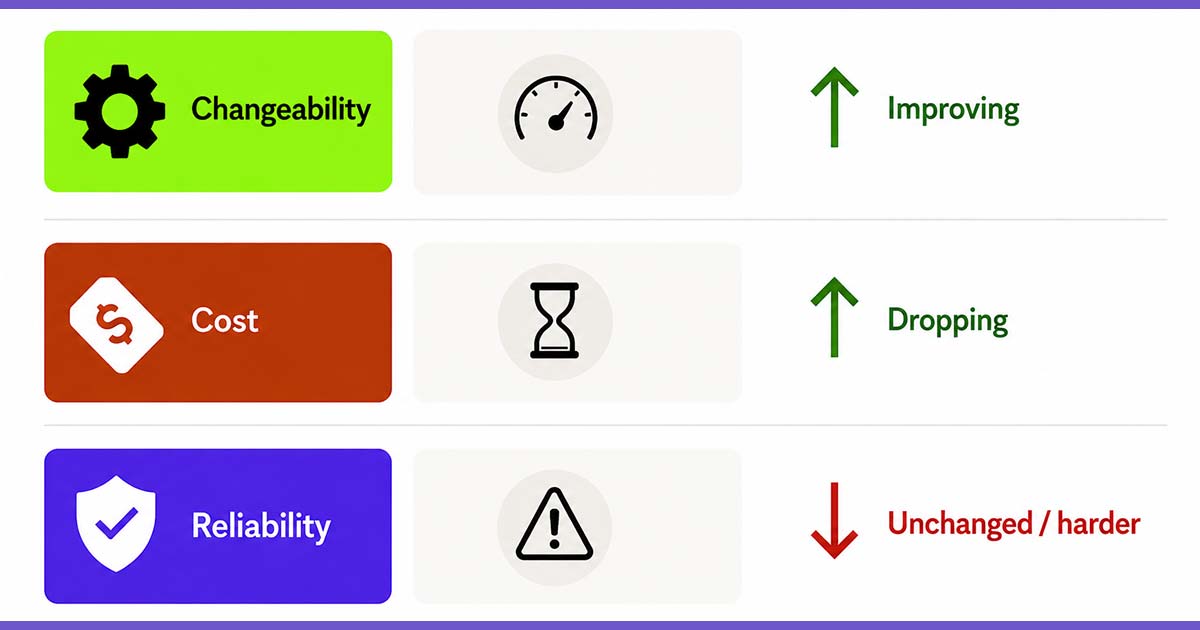

Despite being named Test Double, our heritage is not “we like tests.” We care about feedback loops, clarity, ease of change, and minimizing unintended consequences. In the pre-AI era, that often showed up as code-centric rigor because the production of code dominated the cost structure of software consulting.

In the AI era, code is cheap. Ambiguity and a bad plan are a lot more expensive.

AI commoditizes output generation. It does not commoditize taste, judgment, or accountability for outcomes. Our leverage is shifting from code to the quality of the harness we build around the systems we shape. Outcome quality has to sit at the top of our value hierarchy.

If we align our priorities this way, speed will show up as a byproduct, not because we demanded it or pushed harder on execution, but because we build systems designed for validated learning, adaptation, and catching drift before it compounds.

All of that is a much higher bar than code generation.

Good.

We've always been at our best when the bar is high.

Dave Mosher is a Principal Consultant at Test Double with experience in legacy modernization, agentic coding, and explaining CORS poorly to people who didn't ask.